TGS insights give you the stories behind energy data. These regular, short 3-5 minute reads feature thought-provoking content to illustrate the use of energy data in providing insight, nurturing innovation, and achieving success.

A lot has been made of the changes that seismic companies have had to make to their offshore operations to manage their businesses more effectively. From vessel financing and new technologies, such as ocean bottom nodes, to optimal data acquisition techniques, the industry is constantly working to improve efficiency and the likelihood of oil and gas exploration success.

However, it could be argued that the greatest changes are actually taking place onshore – in the centers where the acquired data is processed, or imaged, to create the most accurate, high-quality sub-surface images and reduce exploration risk. Cloud computing is changing every aspect of this work.

It has been over a decade since the IT departments of myriad industries around the world began employing cloud computing in earnest to improve business efficiency. But early cloud use was mostly confined to file sharing or carrying out large numbers of small data transactions, such as web-based banking, retail or gaming.

Hardware in the Cloud

Unlike ‘traditional’ industries that have used the cloud for these high-volume, low-intensity individual transactions, seismic imagers rely on scientific high-performance computing involving extremely large data volumes and computationally intense algorithms. Compute loads can also swing significantly over the course of a project, because each algorithm places different demands on CPUs, GPUs, memory, network and storage.

Traditional seismic imaging software was also typically developed for specific hardware and not originally designed for the cloud. As a result, the industry did not take advantage of cloud-native technologies such as variable ‘virtual’ machines. Many seismic companies also had an internal ‘inertia’, preferring to keep control of their hardware and carefully manage the cost per compute-hour. However, recent advances by cloud providers have reduced the cost per hour while providing significant flexibility, including elasticity (expansion or contraction of hardware availability to meet business needs) and variable hardware (the right hardware for a specific algorithm). This flexibility has greatly changed the dynamics of the seismic imaging business.

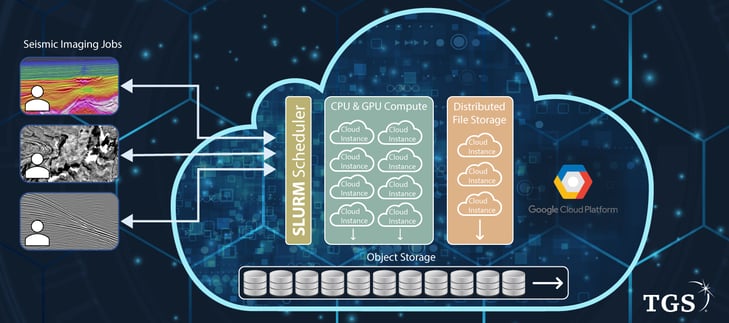

The cloud-based HPC Infrastructure utilized for TGS seismic imaging.

An Elastic Solution

The seismic imaging industry is always pushing for higher quality, using more intense algorithms and/or parameterization for imaging, which in turn drives up computing requirements. However, it is not cost-effective to purchase hardware for peak demand only to leave it idle during lower-demand periods. With fixed on-premises computing hardware, higher compute loads tend to translate to longer project turnaround times. Cloud computing offers a more elastic solution – with users only paying for the capacity they need, when they need it.
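The trade-off between buying for peak demand and paying only for what is used can be sketched with some simple arithmetic. The demand profile, rates and function names below are hypothetical and chosen purely for illustration; they are not TGS or Google Cloud figures.

```python
def fixed_cost(peak_nodes, slots, rate_per_node_slot):
    """Cost of keeping peak capacity available for every time slot."""
    return peak_nodes * slots * rate_per_node_slot


def elastic_cost(demand_by_slot, rate_per_node_slot):
    """Cost of paying only for the nodes actually used in each slot."""
    return sum(demand_by_slot) * rate_per_node_slot


# Hypothetical demand: nodes needed in six equal time slots of a project.
demand = [100, 100, 400, 1000, 300, 100]

# Assumed rates: the elastic rate is set 50% higher per node-slot
# to show that elasticity can still come out cheaper overall.
on_prem_rate = 1.0
cloud_rate = 1.5

fixed = fixed_cost(max(demand), len(demand), on_prem_rate)
elastic = elastic_cost(demand, cloud_rate)

# Fraction of the peak-sized hardware that is actually busy on average.
utilization = sum(demand) / (max(demand) * len(demand))

print(f"fixed: {fixed:.0f}, elastic: {elastic:.0f}, utilization: {utilization:.0%}")
```

In this made-up profile the peak-sized hardware is busy only about a third of the time, so the elastic option costs less even at a higher unit rate. A flatter demand profile would tip the balance back towards fixed hardware.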

Seismic imaging entails the management of massive data volumes, sometimes petabytes, and complex algorithms that require weeks, or even months, to process on thousands to millions of compute nodes. Through the use of cloud services and its partnership with Google Cloud, TGS has, on individual projects, expanded its total computing usage to over three times its on-premises capacity. This has resulted in projects comfortably meeting and even exceeding customer demands in both quality and turnaround time. As one example, the CPU utilization on a number of recent land-based pre-stack time migration (PSTM) projects has exceeded 200 percent of the total on-premises capacity for a single job, shortening project turnaround times from weeks to days. Final Reverse Time Migration (RTM) processing has been reduced to between three and five days in the cloud, for an operation that might previously have taken 25-30 days on-premises.
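The RTM figures quoted above imply a range of speedups, which the short calculation below works out. It is only arithmetic on the quoted day ranges; the function name is an illustrative choice, and the result says nothing about how any individual project actually scaled.

```python
def speedup_range(before_days, after_days):
    """Return the (smallest, largest) speedup implied by two (low, high) ranges."""
    before_low, before_high = before_days
    after_low, after_high = after_days
    # Smallest speedup: shortest 'before' against longest 'after'.
    # Largest speedup: longest 'before' against shortest 'after'.
    return before_low / after_high, before_high / after_low


# Figures quoted in the text: 25-30 days on-premises, 3-5 days in the cloud.
rtm_min, rtm_max = speedup_range((25, 30), (3, 5))
print(f"Implied RTM speedup: {rtm_min:.0f}x to {rtm_max:.0f}x")
```

On those figures, final RTM processing runs roughly five to ten times faster in the cloud.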

With access to almost limitless compute capacity, TGS has been able to undertake additional activities that would previously have been prohibitive in cost and time. The company is now commonly expanding project specifications to higher frequency targets for its proprietary and highly successful Dynamic Matching Full Waveform Inversion (DM FWI) solution in addition to RTM. This enables the company to offer customers additional image clarity and velocity modeling solutions.

Inherent Flexibility

With its cloud provider responsible for supplying the IT infrastructure, TGS’ IT staff have been able to focus their attention on optimizing cloud usage and on other aspects of the company’s technology needs. A global cloud presence also allows the company to process data wherever required, which in turn allows for employee and resource flexibility. Avoiding data transfer restrictions promotes local content wherever in the world it is needed, enabling the company to bring people and data to the computing facility virtually.

Cloud computing is also helping TGS to work towards its sustainability goals. The company has seen a reduction in its carbon footprint as the cloud data centers it utilizes run on 100 percent renewable energy.

TGS’ business is driven by the operators’ need for higher-quality imaging to impact exploration and development projects positively. This means processing must be faster, better, and more economical. TGS is an asset-light business, and cloud computing is changing the way it operates, offering a more flexible and cost-effective approach to its processing while also reducing project timescales.

This new approach is genuinely creating a seismic shift for TGS, its customers, and the industry.

Further Reading:

MBSS Offshore MSGBC Basin, Africa: info.tgs.com/jaan3d

MBSS Offshore Nigeria: info.tgs.com/2020-nigeria